Silicon Valley chip startup Groq has closed a $750 million funding round at a valuation of $6.9 billion. Major tech players have traditionally spent heavily on GPUs from Nvidia and AMD for AI training, but a new wave of specialized startups, Groq among them, is now attracting considerable capital and challenging that norm. Groq's focus on Language Processing Units (LPUs) for AI inference points to a potential shift toward more tailored hardware in the AI sector.

Groq's Funding Success Reshapes AI Chip Market Dynamics

As reported on September 26, 2025, Groq, a Silicon Valley-based chip startup, announced a $750 million funding round that values the company at $6.9 billion. The investment comes as major technology firms, including Amazon, Microsoft, Alphabet, and Meta, continue to channel large amounts of capital into their artificial intelligence capabilities, mainly by buying GPUs from Nvidia and AMD and networking components from Broadcom.

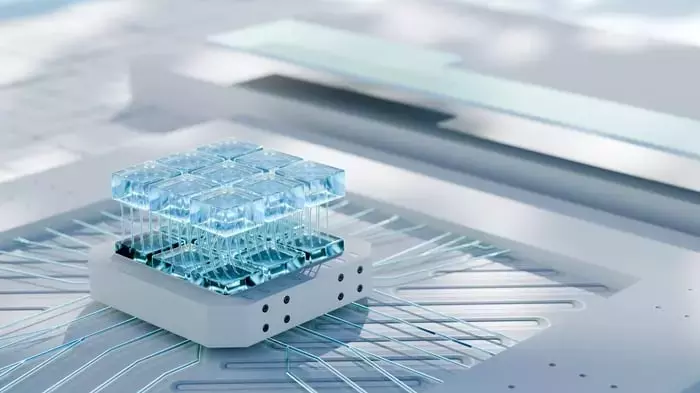

However, the capital flowing into Groq, notably from investors such as Samsung, Cisco, and BlackRock, indicates growing interest in specialized semiconductors beyond the prevailing GPU paradigm. Groq develops Language Processing Units (LPUs), which are engineered specifically for AI inference workloads. That sets them apart from GPUs, which are best known for handling the compute-intensive training of generative AI models.

The distinction between AI training and inference highlights a growing need for diverse chip architectures. Inference, the stage where trained AI models are deployed in real-world applications, demands chips that offer high processing speed, strong power efficiency, and very low latency, which are the attributes LPUs are designed to deliver. This specialization suggests that a one-size-fits-all approach to AI semiconductors may no longer suffice as AI applications become more sophisticated and varied.
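To make those three inference attributes concrete, here is a minimal Python sketch of how they are commonly expressed as metrics: throughput in tokens per second, latency per output token, and energy per token. The `InferenceRun` type, the `summarize` helper, and the sample numbers are hypothetical and purely illustrative; they are not measurements of Groq, Nvidia, or AMD hardware.

```python
from dataclasses import dataclass


@dataclass
class InferenceRun:
    """Hypothetical measurements for serving one request (not vendor data)."""
    tokens_generated: int          # output tokens produced
    time_to_first_token_s: float   # delay before the first token appears
    total_time_s: float            # wall-clock time for the whole response
    avg_power_w: float             # average chip power draw during the run


def summarize(run: InferenceRun) -> dict:
    """Turn raw measurements into speed, latency, and efficiency metrics."""
    # Time spent generating tokens after the first one has been emitted.
    decode_time_s = run.total_time_s - run.time_to_first_token_s
    return {
        # Processing speed: end-to-end throughput.
        "tokens_per_second": run.tokens_generated / run.total_time_s,
        # Latency: average gap between consecutive output tokens.
        "ms_per_output_token": 1000 * decode_time_s / max(run.tokens_generated - 1, 1),
        # Power efficiency: energy (power x time) spent per generated token.
        "joules_per_token": run.avg_power_w * run.total_time_s / run.tokens_generated,
    }


if __name__ == "__main__":
    # Illustrative numbers only.
    sample = InferenceRun(
        tokens_generated=500,
        time_to_first_token_s=0.2,
        total_time_s=2.2,
        avg_power_w=300.0,
    )
    for name, value in summarize(sample).items():
        print(f"{name}: {value:.2f}")
```

Comparing two chips on the same model and prompt with metrics like these is one way a buyer might weigh an inference-focused part against a general-purpose GPU, though real benchmarks also have to account for batch size, model size, and cost.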

Groq's funding round could put pressure on market leaders Nvidia and AMD. Nvidia currently holds an estimated 90% share of the AI accelerator market, a dominance built on its advanced GPU architectures and the robust CUDA software ecosystem. However, as AI workloads become increasingly fragmented, cloud hyperscalers might consider Groq's LPUs for their inference needs, which could compel Nvidia to adapt its strategy or risk ceding ground in the inference segment.

For AMD, which has positioned itself as a cost-effective alternative to Nvidia, Groq's emergence could change the competitive narrative. A move toward multi-vendor platforms, away from sole reliance on Nvidia, could create new opportunities for AMD to expand its market presence. Groq's rise therefore not only challenges Nvidia but also broadens the competitive arena, giving buyers more choices and potentially driving further innovation across the industry.

The Future of AI Hardware: Specialization and Competition

The investment in Groq underscores the growing importance of specialized hardware for diverse AI workloads. The trend suggests that while GPUs will remain crucial for AI model training, demand for highly efficient inference chips like Groq's LPUs will grow. That diversification is healthy for the market because it fosters innovation and competition. Companies like Nvidia, with their established ecosystems and financial strength, are well positioned to adapt and continue leading, but they will need to stay responsive to these evolving demands. For investors, understanding the nuances of AI hardware specialization becomes key to identifying growth opportunities beyond the current market leaders.